The Hidden Architecture Wars Shaping Your Data

From SQL junction tables to ontology-driven typed links, how engineers model relationships defines everything about how software thinks.

Every application you have ever used — your bank's fraud detection engine, the streaming service that knows you better than your therapist, the hospital system quietly tracking drug interactions — rests on a single invisible foundation: the way its data models relationships. Get that architecture right and everything flows. Get it wrong and you inherit a labyrinth of patch queries, broken joins, and circular references that haunt engineering teams for decades. Two dominant philosophies have emerged to solve this problem, and the battle between them is far from settled.

Every application you have ever used — your bank's fraud detection engine, the streaming service that knows you better than your therapist, the hospital system quietly tracking drug interactions — rests on a single invisible foundation: the way its data models relationships. Get that architecture right and everything flows. Get it wrong and you inherit a labyrinth of patch queries, broken joins, and circular references that haunt engineering teams for decades. Two dominant philosophies have emerged to solve this problem, and the battle between them is far from settled.

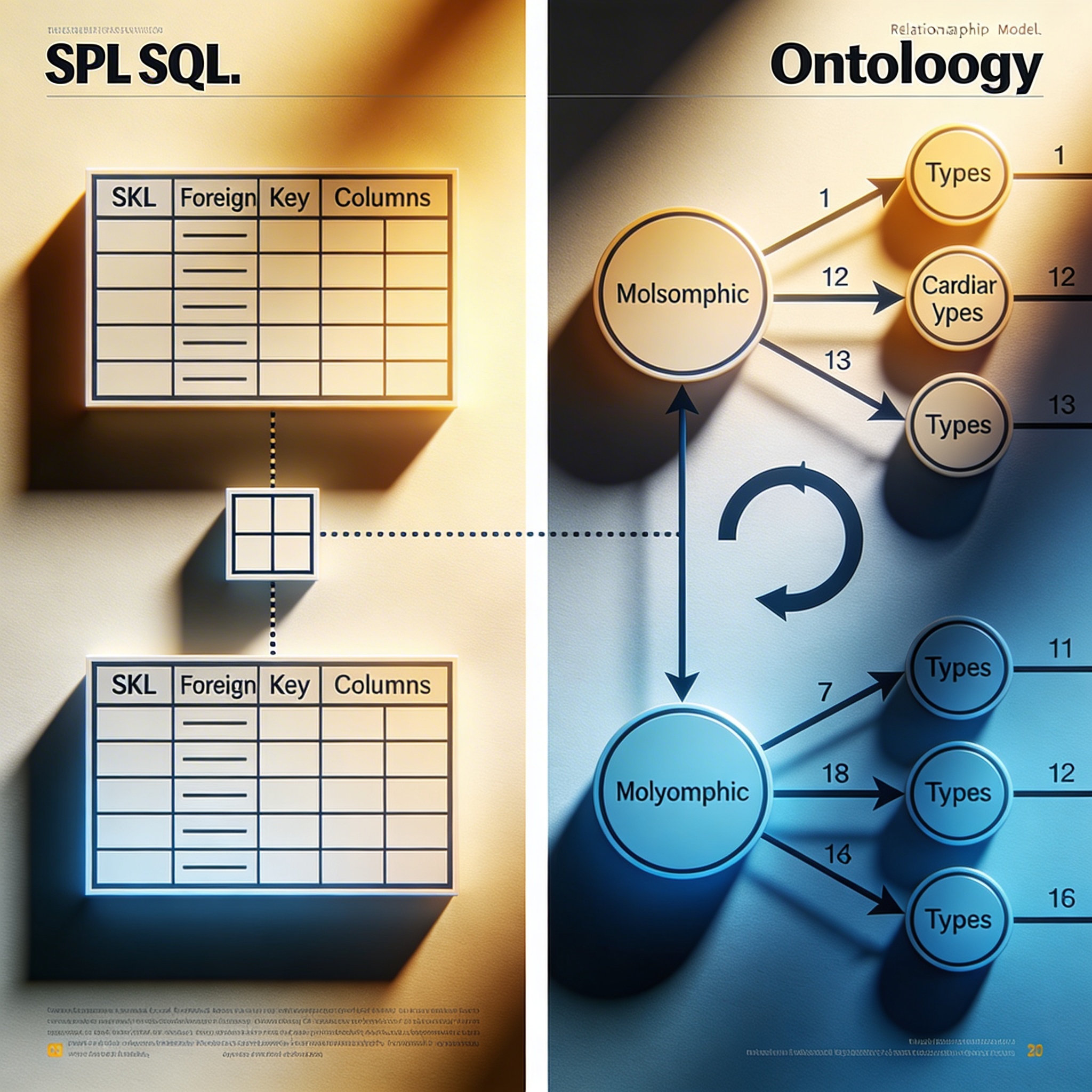

The Relational Backbone — Foreign Keys and the Junction Table Paradigm

The relational database model has been the dominant force in enterprise data architecture since Edgar Codd published his landmark paper in 1970. At its heart lies a deceptively simple mechanism: the foreign key. A foreign key is a column — or a set of columns — in one table that references the primary key of another, creating a navigable, enforceable link between two entities [3]. This constraint is not merely decorative. It is a contract enforced by the database engine itself, ensuring that no orphaned records drift unmoored through your data landscape.

For one-to-many relationships, this mechanism is elegant and sufficient. A single customer can have many orders; each order row carries a customer_id foreign key pointing back to the parent. Clean, fast, indexable. But reality is rarely so cooperative. The world overflows with many-to-many relationships — students enrolled in multiple courses, products belonging to multiple categories, authors contributing to multiple publications — and here the foreign key alone breaks down [2].

The solution the relational world devised is the junction table, sometimes called a bridge table, associative table, or linking table. To model the relationship between students and courses, for example, you introduce a third table — often named something like enrollments — containing at minimum two foreign key columns: one referencing the student, one referencing the course [1]. Together, those two columns typically form a composite primary key, and the junction table becomes the authoritative record of every pairing. Platforms like Stack Overflow's developer community have documented this pattern extensively, noting that the junction table's foreign keys "serve as references to the primary keys of the two original entity tables, establishing the connection" [5].

The power of this approach is its transactional integrity. Relational databases enforce referential integrity at write time. Delete a student and — if cascading rules are configured — the enrollment records vanish cleanly with them. Cardinality, the specification of how many instances of one entity relate to another, is expressed implicitly through schema structure and enforced through constraints [3]. The junction table can also carry its own payload: enrollment date, grade, status — attributes that belong to the relationship itself rather than to either entity alone [6]. This is a quiet but profound capability, transforming a mere link into a first-class data citizen.

"A foreign key tells you that a row in one table points to a row in another — it says nothing about what that pointing means without consulting external documentation."

Ontology's Answer — Typed Directional Links and Semantic Cardinality

Step outside the relational world and into the realm of knowledge graphs, semantic web technologies, and ontology-driven platforms, and the vocabulary shifts dramatically. Here, relationships are not implicit structural artifacts inferred from foreign keys. They are explicit, named, typed, and directional objects in their own right. An ontology defines not just entities and their attributes but the precise nature of every permissible connection between them — a formal specification of a domain's conceptual structure.

In ontological modeling, a link between two entities carries rich metadata by design. Consider a link typed as authoredBy connecting a Document node to a Person node. That link is directional — it flows from document to author, not the reverse — and its type is semantically meaningful, distinguishable from a contributedTo link or a reviewedBy link connecting the same two node types. This is fundamentally different from a SQL foreign key, which is structurally directional but semantically silent. A foreign key tells you that a row in table A points to a row in table B; it says nothing about what that pointing means in human terms without consulting external documentation.

Cardinality in ontology is expressed explicitly and declaratively. Using languages like OWL (Web Ontology Language) or RDF Schema, a data architect can formally assert that a Person may hold exactly one nationality (cardinality of one), may author zero or more documents (minimum cardinality of zero, no upper bound), or must belong to between one and three organizational units (bounded cardinality). These constraints are not just documentation — they are machine-readable axioms that reasoning engines can use to validate data, infer new facts, and detect logical inconsistencies automatically [9].

The practical implications are significant. Organizations like the BBC, Google, and major pharmaceutical companies have adopted ontology-based knowledge graphs precisely because their domains resist the rigid tabular structure of relational models. A drug does not merely "relate to" a disease in one way; it treats, causes, exacerbates, contraindicated-with, and metabolizes-via a constellation of typed relationships, each carrying different cardinality rules and inference implications. No junction table captures that semantic richness without heroic workarounds. The ontological link, by contrast, is built for exactly this complexity — verbose in definition, but precise in meaning.

"The circular reference that breaks a relational cascade rule is simply a loop in a graph, handled with a visited-node check."

Many-to-Many Polymorphism and the Circular Reference Problem

Polymorphic associations represent one of the most revealing stress tests for any data modeling philosophy. In object-oriented programming, polymorphism is celebrated — a single interface, many implementations. In databases, it becomes a source of considerable pain. A polymorphic association exists when a single foreign key column can reference rows in multiple different tables depending on context. A comment, for instance, might belong to a blog post, a video, a product listing, or a user profile — all represented by different tables with different schemas.

In SQL, the classic workaround involves adding a second column alongside the foreign key — typically named something like commentable_type — that stores a string indicating which table the foreign key points to [4]. Rails developers will recognize this as the polymorphic association pattern. It is pragmatic but fragile. The database engine cannot enforce referential integrity across a polymorphic key because it cannot know at constraint-definition time which table to check. The result is a relationship that exists in application logic but is invisible to the database itself, a ghost in the machine that only manual validation can police.

Junction tables can partially address this through careful schema design — creating separate junction tables for each possible association type — but this approach multiplies table count rapidly and scatters relationship logic across the schema in ways that become difficult to reason about at scale [2]. Circular references compound the misery. Consider an employee who manages other employees, or a category that contains subcategories. Self-referential foreign keys handle simple hierarchies, but deeply recursive structures — where A references B, B references C, and C references A — can confuse query optimizers and create deletion-order paradoxes that cascade constraints cannot resolve cleanly.

Ontological models handle polymorphism with considerably more grace. Because every link is typed and every node carries an explicit type declaration, a single relationship type like hasComment can legitimately connect a Comment node to any number of target node types — BlogPost, Video, Product — without ambiguity. The type system governs what connections are permissible; the graph structure records what connections exist. Circular references, meanwhile, are not inherently problematic in a graph model. Graphs are defined mathematically to support cycles, and traversal algorithms — depth-first search, breadth-first search, cycle detection — are native capabilities of graph query languages like SPARQL and Cypher [9]. The circular reference that breaks a relational cascade rule is simply a loop in a graph, handled with a visited-node check.

"The most sophisticated data architectures of the coming decade will speak both languages — relational for integrity, semantic for meaning — bridged by engineers fluent in both."

Choosing Your Weapon — When Each Model Wins

No architecture is universally superior, and the engineers who insist otherwise are usually selling something. The relational model with foreign keys and junction tables remains the right choice for a vast class of problems: transactional systems, financial ledgers, inventory management, user authentication — any domain where data integrity, atomic transactions, and well-understood query patterns dominate the requirements. The ACID guarantees of mature relational databases like PostgreSQL, MySQL, and Oracle are battle-tested across decades and billions of transactions. The junction table pattern, despite its verbosity, is well understood by every working database administrator on the planet, supported by every ORM framework, and documented exhaustively by communities like Stack Overflow [1].

The costs, however, are real and worth naming honestly. Complex many-to-many schemas with polymorphic associations can generate SQL queries of alarming complexity — multi-level joins across five or six tables, with UNION clauses stitching together polymorphic fragments, performance degrading as data volumes grow. Cardinality rules live in application code rather than the database schema, creating a dangerous gap between intention and enforcement [4]. Schema migrations — adding a new relationship type, restructuring a junction table — require careful coordination, downtime windows, and regression testing that ontology-based systems can often avoid through their more flexible graph structures.

Ontology-driven systems shine when the domain is inherently complex, heterogeneous, and evolving. Knowledge graphs built on semantic technologies excel at integrating data from multiple sources with different schemas, inferring implicit knowledge from explicit facts, and supporting queries that traverse unpredictable relationship paths [9]. The pharmaceutical industry's adoption of knowledge graphs for drug discovery, and the intelligence community's use of semantic networks for entity resolution, reflect domains where the richness of typed directional links with formal cardinality constraints delivers capabilities that junction tables simply cannot match.

The decision, ultimately, is not about which model is smarter. It is about which model fits the shape of your problem. Relational databases speak the language of tables, constraints, and transactions. Ontologies speak the language of meaning, inference, and connection. The most sophisticated data architectures of the coming decade will likely speak both — using relational stores for transactional integrity and semantic layers for knowledge representation, bridged by APIs and graph query interfaces that let each technology do what it does best. The engineers who understand both paradigms deeply, who can read a junction table and an OWL axiom with equal fluency, will be the architects of systems that actually work.